
Xise Eliza App Controlled Thrusting Realistic Vaginal Stroker - Flesh

Xise Eliza is an app-controlled thrusting stroker featuring realistic 3D textured channels and dual motion technology. With an 8.5-inch insertable length, it delivers three thrusting speeds combined with seven vibration modes, controlled via a smartphone app with video-recognition sync. It's suited for people seeking...

- $129.99 (regular price $139.99)


Xise Sora App Control Vibrating Realistic Vaginal Masturbator - Flesh

XISE Sora is an app-controlled thrusting masturbator featuring dual-action motion synchronized to video content through intelligent recognition technology. With an 8.5-inch insertable length and a richly textured internal channel, it delivers multiple thrusting speeds plus vibration modes. The IPX7 waterproof design and magnetic charging...

- $129.99 (regular price $139.99)


Zero Tolerance HOLD ME TIGHT Vibrating Automatic Stroker - Black

Zero Tolerance HOLD ME TIGHT is a heated, thrusting stroker featuring 10 vibration speeds, 7 thrust speeds, and a channel that warms to 40–42°C. Standing 35 cm tall, with a 13.3 cm insertable length and a 5.7 cm diameter, it includes gaming-style handles, a phone mount...

- $289.99 (regular price $299.99)


Lovense Solace Automatic Thrusting Male Masturbator

Effortless Pleasure Engineered. The Lovense Solace Automatic Thrusting Male Masturbator revolutionizes solo experiences with its advanced mechanical system that delivers powerful, consistent strokes without manual effort. This sophisticated device combines precision engineering with intuitive app control, creating a...

- $309.99 (regular price $374.99)


Autoblow A.I. Sleeve Anal - Brown

Premium Silicone Pleasure Enhancement. The Autoblow A.I. Sleeve Anal represents a breakthrough in intimate pleasure technology, crafted specifically for discerning adults who appreciate premium materials and thoughtful design. This specialized sleeve, made from 100% premium silicone, delivers an incredibly...

- $74.99 (regular price $104.99)


Autoblow AI Ultra

Where Artificial Intelligence Meets Intimate Pleasure. The Autoblow AI Ultra represents a revolutionary leap forward in intimate pleasure technology, combining cutting-edge artificial intelligence with sophisticated engineering to deliver an unparalleled experience. This masterfully crafted device brings together innovation and satisfaction in...

- $324.99 (regular price $369.99)


Hypershaker Automatic App Controlled LCD Masturbator - Blue

The Hypershaker is an automatic app-controlled masturbator featuring 4 vibrating bullets, 9 suction modes, and a realistic silicone sleeve with tongue textures. Standing 225.9 mm tall with a 110 mm insertable length, it delivers deep-throat suction combined with surround vibration. The global app supports up...

- $119.99 (regular price $129.99)


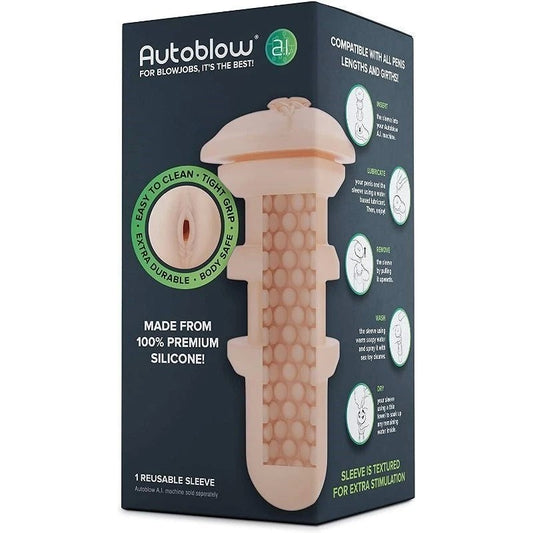

Autoblow A.I. Sleeve Vagina - Brown

Advanced Pleasure Technology. The Autoblow A.I. Sleeve Vagina represents the pinnacle of intimate pleasure technology, crafted specifically for the discerning adult seeking premium stimulation. This sophisticated sleeve, developed through extensive research and material innovation, delivers an extraordinarily lifelike sensation...

- $79.99 (regular price $99.99)


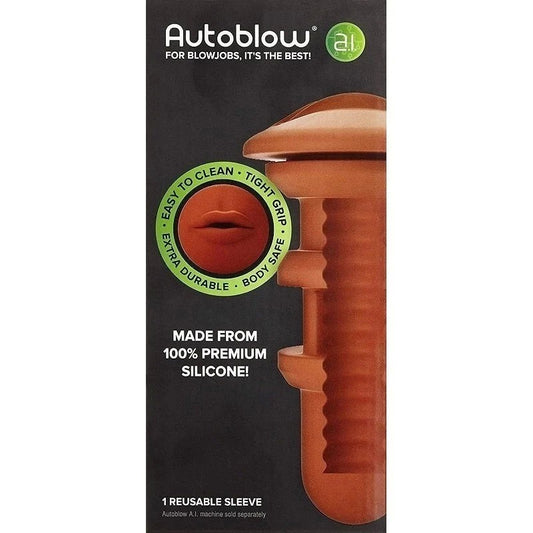

Autoblow A.I. Sleeve Mouth - Brown

Premium Silicone Enhancement Accessory. The Autoblow A.I. Sleeve Mouth represents a breakthrough in pleasure technology, crafted specifically for discerning adults seeking to expand their intimate experiences. This premium silicone sleeve transforms your Autoblow A.I. device into an entirely new...

- From $74.99 (regular price $99.99)


AUTOBLOW A.I. PLUS MACHINE (INCLUDES 1 MOUTH SLEEVE)

The Future of Pleasure Technology. The AUTOBLOW A.I. PLUS MACHINE represents a groundbreaking advancement in pleasure technology, combining artificial intelligence with precise engineering to deliver an extraordinary intimate experience. This revolutionary device features a realistic mouth sleeve and sophisticated...

- $424.99 (regular price $549.99)


AI Masturbators FAQ

Do AI masturbators require multiple sessions before generating accurate personalized patterns?

Learning algorithms need 5-15 sessions to collect enough data to identify consistent preferences before optimization accuracy improves noticeably. Early sessions may feel generic while the system gathers baseline information, and personalization quality increases with continued use.

Do AI algorithms ever recommend intensity levels unsafe for extended use?

Responsible systems incorporate safety constraints that prevent generated patterns from exceeding established intensity thresholds, regardless of detected preferences. However, users should still watch for discomfort: algorithms cannot detect every individual variation in tolerance, so manual intervention is needed if automated patterns prove too aggressive.

Can pattern recognition algorithms distinguish between different arousal states across sessions?

Advanced systems detect variations in engagement level and adapt patterns for quick sessions versus extended encounters, based on sensor data that indicates different usage contexts. Basic models may lack this contextual awareness and apply learned preferences uniformly, regardless of the current session's intent.

How does predictive pattern generation avoid creating unwanted stimulation combinations?

Algorithms keep new patterns within the boundaries set by detected preferences, testing variations incrementally rather than generating random combinations. User feedback ratings help the system identify unsuccessful predictions so that disliked generated patterns are not repeated.

Do real-time adaptive features respond faster than users can consciously recognize arousal changes?

Sensor analysis detects physiological indicators, such as changes in grip pressure or movement acceleration, before the user is consciously aware of them, which enables preemptive adjustment. Because the response is anticipatory, users experience seamless intensity management that they may not consciously attribute to automated adaptation.

Can cloud-connected AI masturbators improve faster than local-processing models?

Cloud systems can access broader datasets and more powerful algorithms than onboard processors support, potentially accelerating learning through shared, anonymized usage patterns. However, for some users the privacy trade-offs and connectivity requirements may outweigh the speed advantage.

How do AI systems handle contradictory preference signals across different sessions?

Advanced algorithms weight recent data more heavily while retaining historical context, so they can detect evolving preferences instead of treating contradictions as errors. Statistical analysis of usage patterns over time lets these systems distinguish genuinely shifting preferences from random variation.

Do AI masturbators retain learned preferences indefinitely or require periodic retraining?

Most systems keep preference profiles until they are explicitly reset, though some incorporate preference-drift detection that updates the model when usage patterns shift consistently. Retention varies by manufacturer: some cloud systems keep data across device replacements, while locally stored models reset with new hardware.

Can multiple users share a single AI device without preference profile conflicts?

Devices that support multiple app profiles keep learning separate for each user, preventing preferences from mixing. Single-profile systems cannot tell users apart, so a device shared by people with conflicting stimulation preferences ends up averaging them.

How does sensor calibration affect AI learning accuracy over device lifespan?

Sensor degradation or drift can distort the collected data, reducing learning effectiveness as measurements become less accurate. Quality devices include calibration routines that maintain sensor precision, while budget models without recalibration capability may see AI performance decline as the hardware ages.


Why Shop With Adultsmart?
